| IP Overview |

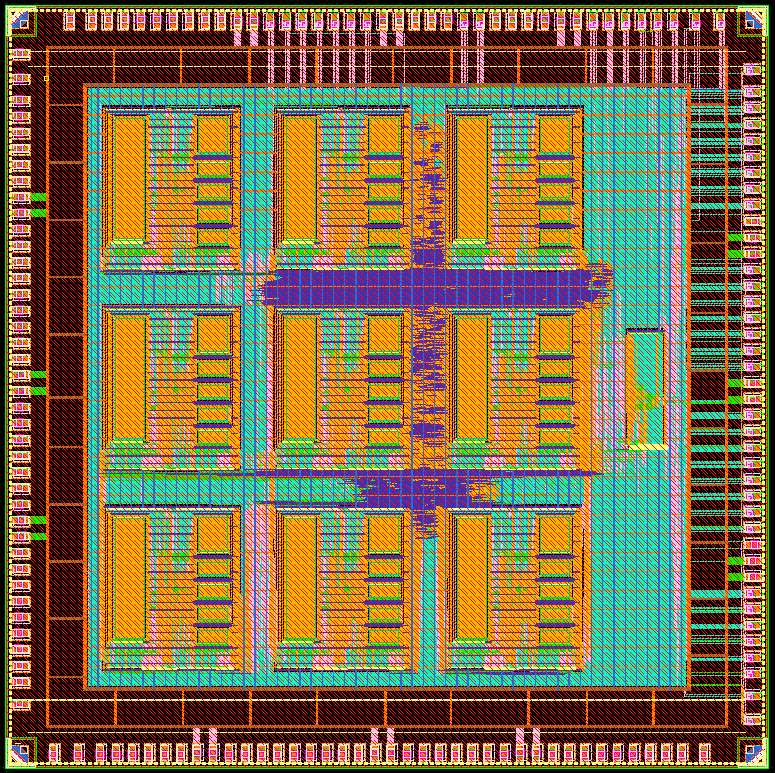

This paper presents a Networked Processor Array (NePA) architecture that addresses the challenges of real-time AI processing by integrating an on-chip communication network with XY-YX adaptive routing and a gateway-based real-time data interface. The system consists of heterogeneous compute and memory nodes arranged in a 4×4 2D mesh topology. Each node includes a lightweight router that uses wormhole switching and a dual-path routing mechanism to mitigate congestion and ensure deadlock-free packet delivery. For communication with external systems, a real-time interface module is implemented with finite-state-machine control and handshake-based FIFOs, supporting bidirectional command and data transactions. The architecture scales modularly and enables parallel execution of AI workloads with low latency and high throughput. RTL simulations confirm that the system performs packet transmission, command-driven computation, and host interaction as intended. The proposed NePA is suited to future AI accelerators that require predictable performance and efficient communication under tight timing constraints.
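
To make the dual-path idea concrete, the following is a minimal behavioral sketch in Python of XY and YX dimension-order path computation on a 4×4 mesh. It is not the paper's RTL. The names `xy_path`, `yx_path`, and `choose_path`, the `load` callback, and the "pick the less loaded path" policy are all assumptions introduced for illustration; the abstract does not specify how the router selects between the two paths. The sketch models path selection only and does not model wormhole flit flow or the mechanism that provides deadlock freedom.

```python
from typing import Callable, List, Tuple

Node = Tuple[int, int]  # (x, y) coordinate of a node in the mesh

MESH_SIZE = 4  # 4x4 2D mesh, as described in the text


def _step_toward(current: int, target: int) -> int:
    """Move one hop along a single dimension toward the target."""
    return current + (1 if target > current else -1)


def xy_path(src: Node, dst: Node) -> List[Node]:
    """Dimension-order route: traverse X fully, then Y."""
    x, y = src
    path = [src]
    while x != dst[0]:
        x = _step_toward(x, dst[0])
        path.append((x, y))
    while y != dst[1]:
        y = _step_toward(y, dst[1])
        path.append((x, y))
    return path


def yx_path(src: Node, dst: Node) -> List[Node]:
    """Dimension-order route: traverse Y fully, then X."""
    x, y = src
    path = [src]
    while y != dst[1]:
        y = _step_toward(y, dst[1])
        path.append((x, y))
    while x != dst[0]:
        x = _step_toward(x, dst[0])
        path.append((x, y))
    return path


def choose_path(src: Node, dst: Node, load: Callable[[Node], int]) -> List[Node]:
    """Hypothetical selection policy: take whichever of the XY and YX
    paths has the lower summed load. Ties go to the XY path."""
    for x, y in (src, dst):
        if not (0 <= x < MESH_SIZE and 0 <= y < MESH_SIZE):
            raise ValueError(f"node {(x, y)} is outside the {MESH_SIZE}x{MESH_SIZE} mesh")
    candidates = [xy_path(src, dst), yx_path(src, dst)]
    return min(candidates, key=lambda path: sum(load(node) for node in path))


if __name__ == "__main__":
    # Assume node (1, 0) is congested; every other node is idle.
    congestion = {(1, 0): 5}
    route = choose_path((0, 0), (2, 1), lambda node: congestion.get(node, 0))
    print(route)
```

Both candidate paths are minimal (they have the same hop count), so under this assumed policy the choice between them changes only which intermediate routers a packet crosses, not how far it travels.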