| IP Overview |

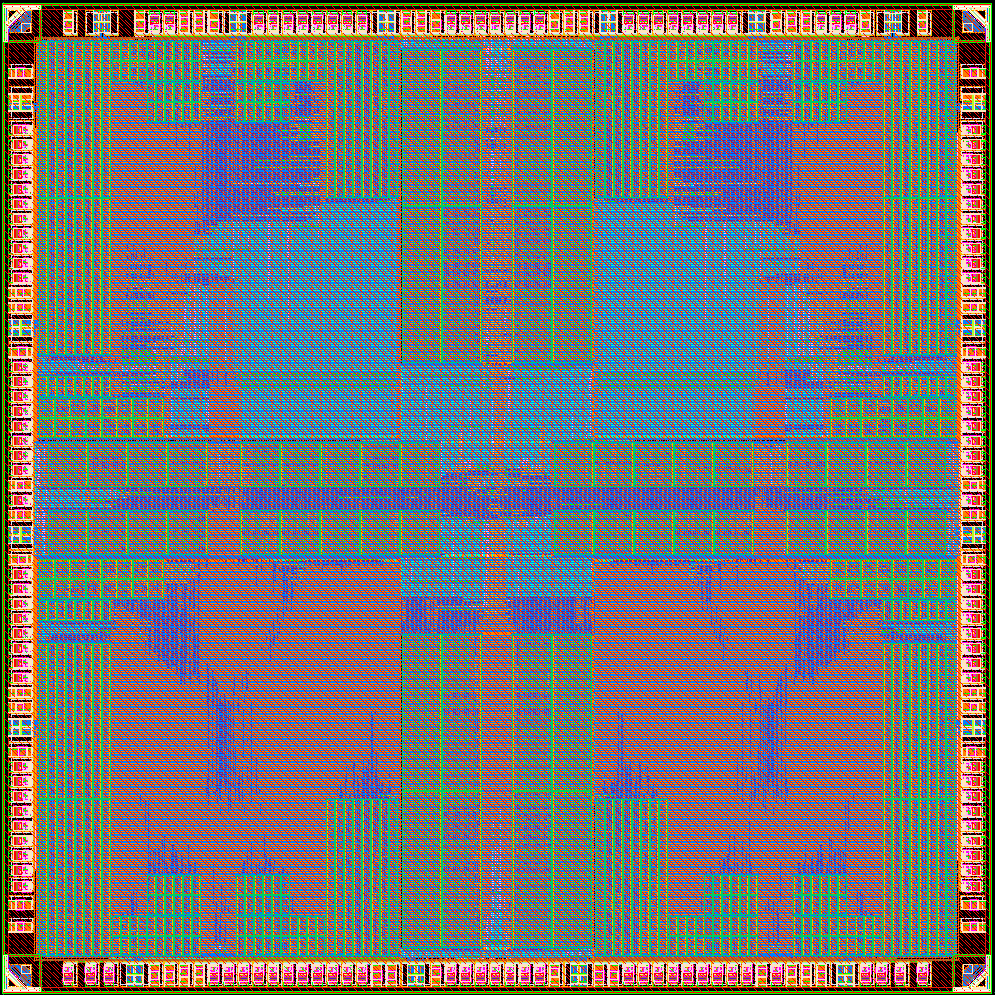

This paper presents a new NPU designed to realize a cloud-edge-device co-acceleration ecosystem. Unlike conventional NPUs, which have been limited to inference, the proposed processor also supports AI training and is designed with a scalable architecture so that it can be used in both edge nodes and mobile devices. Moreover, the proposed NPU supports cloud-edge-device co-inference and co-training scenarios by introducing numerous features based on hardware-software co-optimization. The processor incorporates a novel main core that accelerates both inference and training of convolutional and attention layers, a peripheral core for specialized tensor operations, and a memory management unit for efficient off-chip data handling. The 4 mm × 4 mm chip targets higher throughput and higher energy efficiency than conventional state-of-the-art inference or training processors. The fabricated chip will also demonstrate two scalable applications: one on mobile devices using a single-chip board, and one on edge nodes using a multi-chip board. |